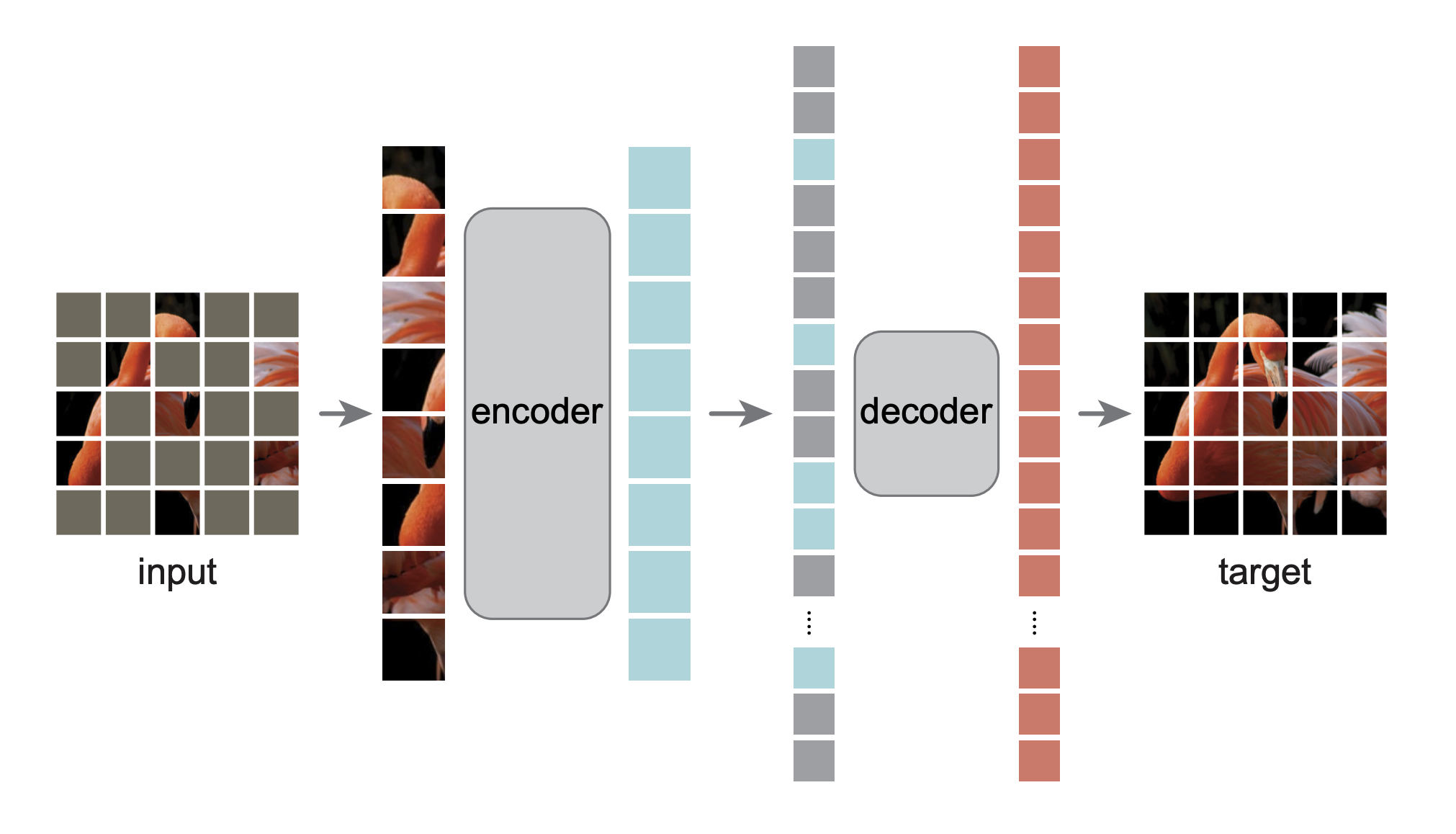

Masked Autoencoders Are Scalable Vision Learners

Kaiming He, Xinlei Chen, Saining Xie, Yanghao Li, Piotr Dollár, Ross Girshick

This is the OneFlow re-implementation of MAE based on LiBai.

- MAE pretraining code

- MAE finetune code

Built on libai.layers, the MAE model is automatically configured to support the following parallelism modes.

| Model | Data Parallel | Tensor Parallel | Pipeline Parallel |
|---|---|---|---|
| MAE pretrain | ✔ | - | - |
| MAE finetune | ✔ | ✔ | ✔ |

To install LiBai, see LiBai Installation.

To prepare the data, see Prepare the Data.

Pretrain MAE on 8 GPUs using data parallelism:

    cd /path/to/libai
    bash tools/train.sh projects/MAE/train_net.py projects/MAE/configs/mae_pretraining.py 8

- Set up the weights for finetuning in mae_finetune.py as follows:

      # mae_finetune.py
      finetune.enable = True  # only load weights if enable is True
      finetune.weight_style = "oneflow"  # set "oneflow" to load OneFlow checkpoints
      finetune.path = "/path/to/checkpoint"  # the checkpoint directory

If the checkpoint format here is unclear, refer to Load and Save a Checkpoint in LiBai for more details.

- Finetune MAE on 8 GPUs using data parallelism.

      cd /path/to/libai
      bash tools/train.sh projects/MAE/train_net.py projects/MAE/configs/mae_finetune.py 8

Note: to finetune MAE models with a different parallel strategy, refer to the Distributed Configuration Tutorial.
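When choosing a different parallel strategy, it can help to list the layouts that fit the available GPUs. The sketch below is not part of LiBai: `parallel_layouts` is a hypothetical helper, and it assumes the usual 3D-parallelism constraint that the data, tensor, and pipeline parallel sizes multiply to the total GPU count. Check the Distributed Configuration Tutorial for the exact config fields that carry these sizes.

```python
from itertools import product


def parallel_layouts(num_gpus):
    """Return every (data, tensor, pipeline) size triple whose product is num_gpus.

    Hypothetical helper for illustration only; it is not a LiBai API.
    """
    # Each parallel size must divide the GPU count, so only divisors are candidates.
    divisors = [d for d in range(1, num_gpus + 1) if num_gpus % d == 0]
    return [
        (dp, tp, pp)
        for dp, tp, pp in product(divisors, repeat=3)
        if dp * tp * pp == num_gpus
    ]


layouts = parallel_layouts(8)
for dp, tp, pp in layouts:
    print(f"data={dp} tensor={tp} pipeline={pp}")
```

Per the table above, MAE pretraining supports data parallelism only, so only the layout with tensor and pipeline sizes of 1 applies there; finetuning can use any of the listed layouts.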

Evaluate the MAE model in LiBai on 8 GPUs:

    cd /path/to/libai
    bash tools/train.sh projects/MAE/train_net.py projects/MAE/configs/mae_finetune.py 8 --eval-only

You can download PyTorch pretrained weights from the official MAE repo and finetune them in LiBai by updating mae_finetune.py as follows:

    finetune.enable = True  # only load weights if enable is True
    finetune.weight_style = "pytorch"  # set "pytorch" to load PyTorch checkpoints
    finetune.path = "/path/to/mae_finetuned_vit_base.pth"

Run finetuning on 8 GPUs:

    cd /path/to/libai
    bash tools/train.sh projects/MAE/train_net.py projects/MAE/configs/mae_finetune.py 8

Citation:

    @article{he2021masked,
      title={Masked autoencoders are scalable vision learners},
      author={He, Kaiming and Chen, Xinlei and Xie, Saining and Li, Yanghao and Doll{\'a}r, Piotr and Girshick, Ross},
      journal={arXiv preprint arXiv:2111.06377},
      year={2021}
    }